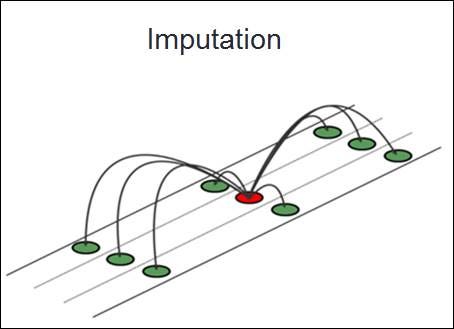

Handling missing data is a common and important problem in data science. Missing values can arise from data entry errors, sensor failures, or data corruption, and if left unaddressed they can bias results and degrade model performance. Data imputation is the process of filling in these missing values. In this article, we'll look at several data imputation strategies in Python.

Understanding Missing Data

Before getting into imputation approaches, it's important to understand the different forms of missing data:

1. MCAR (Missing Completely at Random): The probability that a value is missing is unrelated to any data, observed or unobserved.

2. MAR (Missing at Random): The probability that a value is missing depends only on the observed data, not on the missing value itself.

3. MNAR (Missing Not at Random): The probability that a value is missing depends on the missing value itself, even after accounting for the observed data.

Knowing which mechanism applies helps in selecting an appropriate imputation approach.
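To make the three mechanisms concrete, here is a minimal sketch that simulates each one on toy data. The column names (`age`, `income`), the thresholds, and the missingness rates are all made up for illustration.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 1000

# Hypothetical toy data: age is always observed, income may go missing
df_full = pd.DataFrame({
    "age": rng.integers(20, 70, size=n),
    "income": rng.normal(50_000, 15_000, size=n),
})

# MCAR: every income value has the same 20% chance of being missing
mcar = df_full.copy()
mcar.loc[rng.random(n) < 0.2, "income"] = np.nan

# MAR: missingness depends on an observed column (here, people over 50 skip more often)
mar = df_full.copy()
p_mar = np.where(df_full["age"] > 50, 0.4, 0.05)
mar.loc[rng.random(n) < p_mar, "income"] = np.nan

# MNAR: missingness depends on the income value itself (high earners skip more often)
mnar = df_full.copy()
p_mnar = np.where(df_full["income"] > 60_000, 0.5, 0.05)
mnar.loc[rng.random(n) < p_mnar, "income"] = np.nan

# Share of missing values per column in each scenario
for name, frame in [("MCAR", mcar), ("MAR", mar), ("MNAR", mnar)]:
    print(name, frame.isnull().mean().round(3).to_dict())

# Under MNAR the observed incomes are no longer representative of the full data
print("true mean:", round(df_full["income"].mean()), "observed mean under MNAR:", round(mnar["income"].mean()))
```

In the MNAR scenario the observed mean drifts away from the true mean, which is why no imputation method that relies only on the observed values can fully correct for it.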

Techniques for Data Imputation

1. Mean, Median, and Mode Imputation

These basic approaches replace each missing value with a single summary statistic of the column: the mean or median for numerical data, or the mode (most frequent value) for categorical data.

Implementation

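from sklearn.impute import SimpleImputer

# df is assumed to be an existing DataFrame with a numeric column 'column_with_nan'
mean_imputer = SimpleImputer(strategy='mean')
# fit_transform returns a 2D array, so flatten it before assigning to a single column
df['column_with_nan'] = mean_imputer.fit_transform(df[['column_with_nan']]).ravel()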
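The same class covers the median and mode cases through its `strategy` parameter. The sketch below uses a small made-up DataFrame (`df_example`, with hypothetical `age` and `city` columns) to show both.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

# Hypothetical example data with one numeric and one categorical column
df_example = pd.DataFrame({
    "age": [25.0, np.nan, 40.0, 31.0, 120.0],
    "city": pd.Series(["Paris", "Lyon", np.nan, "Paris", "Paris"], dtype=object),
})

# The median is less sensitive to outliers (such as 120) than the mean
median_imputer = SimpleImputer(strategy="median")
df_example["age"] = median_imputer.fit_transform(df_example[["age"]]).ravel()

# The most frequent value (mode) also works for categorical columns
mode_imputer = SimpleImputer(strategy="most_frequent")
df_example["city"] = mode_imputer.fit_transform(df_example[["city"]]).ravel()

print(df_example)
```

Here the missing age becomes 35.5 (the median of 25, 31, 40, and 120) and the missing city becomes "Paris".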

2. K-Nearest Neighbor (KNN) Imputation

KNN imputation fills each missing value using the 'k' most similar rows (the nearest neighbors), typically by averaging their values for that feature.

Implementation

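import pandas as pd
from sklearn.impute import KNNImputer

# KNNImputer works on numeric data only, so df is assumed to contain numeric columns
knn_imputer = KNNImputer(n_neighbors=5)
df_knn_imputed = pd.DataFrame(knn_imputer.fit_transform(df), columns=df.columns, index=df.index)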
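A tiny worked example may help show what the neighbors contribute. The data below is invented for illustration: the last row is missing its weight, and its two nearest neighbors by height are the first two rows.

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer

# Hypothetical toy data: the last row has no recorded weight
df_small = pd.DataFrame({
    "height_cm": [160.0, 162.0, 180.0, 182.0, 161.0],
    "weight_kg": [55.0, 57.0, 80.0, 82.0, np.nan],
})

# With k=2 the imputer averages the weights of the two rows closest in height
knn_small = KNNImputer(n_neighbors=2)
df_small_imputed = pd.DataFrame(
    knn_small.fit_transform(df_small),
    columns=df_small.columns,
    index=df_small.index,
)

print(df_small_imputed)
```

The missing weight is filled with 56.0, the average of 55 and 57. Because the method is distance-based, columns on very different scales can dominate the neighbor search, so scaling the features first is often worth considering.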

3. Multivariate Imputation using Chained Equations (MICE)

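MICE models each variable with missing values as a function of the other variables and cycles through these models repeatedly, refining the estimates on each pass. In scikit-learn, a MICE-style approach is available through IterativeImputer, which returns a single imputed dataset rather than multiple imputations.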

Implementation

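import pandas as pd
# IterativeImputer is experimental and must be enabled explicitly before it is imported
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

mice_imputer = IterativeImputer()
df_mice_imputed = pd.DataFrame(mice_imputer.fit_transform(df), columns=df.columns, index=df.index)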
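One way to see why modeling variables from each other helps is to hide some known values and compare the reconstruction against a plain mean fill. The following is a sketch on synthetic data, assuming a nearly linear relationship between two made-up columns `x` and `y`. Real datasets will rarely be this clean.

```python
import numpy as np
import pandas as pd
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

rng = np.random.default_rng(0)

# Synthetic data where y is roughly 2 * x
x = rng.normal(size=200)
df_lin = pd.DataFrame({"x": x, "y": 2 * x + rng.normal(scale=0.1, size=200)})

# Hide about 20% of y, keeping the true values for comparison
y_true = df_lin["y"].copy()
mask = rng.random(200) < 0.2
df_lin.loc[mask, "y"] = np.nan

imputer = IterativeImputer(max_iter=10, random_state=0)
df_filled = pd.DataFrame(
    imputer.fit_transform(df_lin), columns=df_lin.columns, index=df_lin.index
)

# Mean absolute error on the hidden entries for each approach
mean_fill_error = np.abs(df_lin["y"].mean() - y_true[mask]).mean()
iterative_error = np.abs(df_filled.loc[mask, "y"] - y_true[mask]).mean()
print(f"mean fill: {mean_fill_error:.3f}, iterative: {iterative_error:.3f}")
```

Because `y` is strongly related to `x` in this toy setup, the iterative imputer recovers the hidden values far more closely than the column mean does.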

4. Applying Machine Learning Algorithms for Imputation

Advanced imputation approaches train a machine learning model on the rows where a value is known and use it to predict the missing values.

Implementation

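from sklearn.ensemble import RandomForestRegressor

# For numerical columns; assumes the other columns are numeric and contain no missing values
def fill_missing_rf(df, target_column):
    df = df.copy()  # avoid modifying the caller's DataFrame
    missing = df[target_column].isnull()
    if not missing.any():
        return df  # nothing to impute
    # Rows with a known target train the model; rows without one receive predictions
    known = df[~missing]
    unknown = df[missing]
    X = known.drop(columns=[target_column])
    y = known[target_column]
    model = RandomForestRegressor(random_state=0)
    model.fit(X, y)
    predicted = model.predict(unknown.drop(columns=[target_column]))
    df.loc[missing, target_column] = predicted
    return df

df = fill_missing_rf(df, 'column_with_nan')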
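The function above targets numerical columns. For a categorical column, the same idea can be sketched with a classifier instead of a regressor. The helper name `fill_missing_rf_categorical` and the toy data are hypothetical, and the sketch assumes the feature columns are numeric and complete.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestClassifier

def fill_missing_rf_categorical(df, target_column):
    """Hypothetical helper: impute a categorical column with a random forest classifier."""
    df = df.copy()  # leave the caller's DataFrame untouched
    missing = df[target_column].isnull()
    if not missing.any():
        return df  # nothing to impute
    features = df.drop(columns=[target_column])
    model = RandomForestClassifier(n_estimators=100, random_state=0)
    # Train on rows with a known label, then predict labels for the rest
    model.fit(features[~missing], df.loc[~missing, target_column])
    df.loc[missing, target_column] = model.predict(features[missing])
    return df

# Toy data: the label follows the weight, and the last two labels are missing
df_cat = pd.DataFrame({
    "weight": [1.0, 1.2, 0.9, 9.5, 10.0, 10.5, 1.1, 9.8],
    "size": pd.Series(
        ["small", "small", "small", "large", "large", "large", np.nan, np.nan],
        dtype=object,
    ),
})

df_cat = fill_missing_rf_categorical(df_cat, "size")
print(df_cat)
```

On this toy data the two missing labels come back as "small" and "large", matching the pattern in the weights.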

Conclusion

Data imputation is an important stage of the data preparation workflow. Simple approaches such as mean, median, and mode imputation are quick and easy to apply, while more advanced methods such as KNN, MICE, and machine learning-based imputation can produce better results, particularly for complex datasets. Choosing an imputation approach that suits your data can noticeably improve data analysis and model performance.

Experimenting with the imputation strategies above will help you handle missing data properly and keep your analysis robust. Have fun imputing! :-)