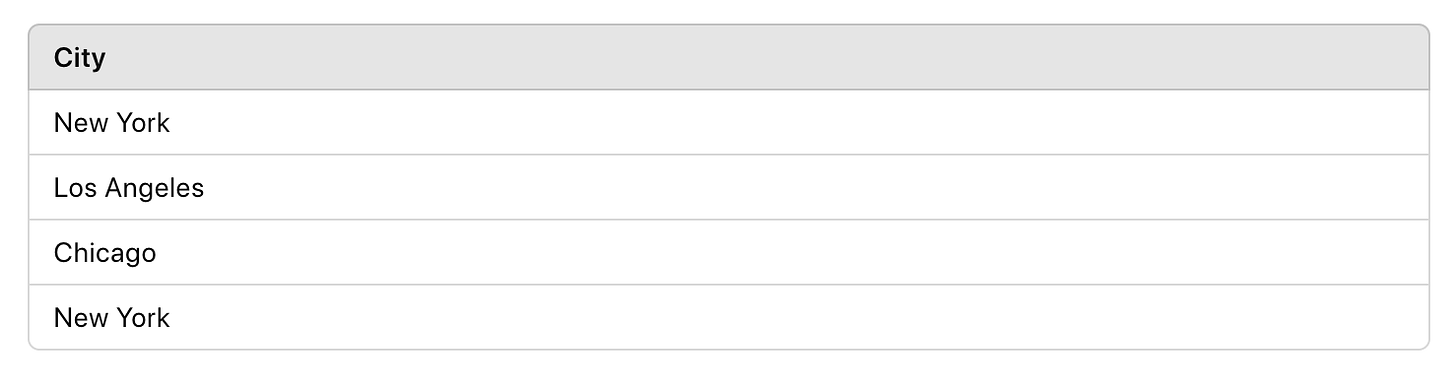

Label Encoding

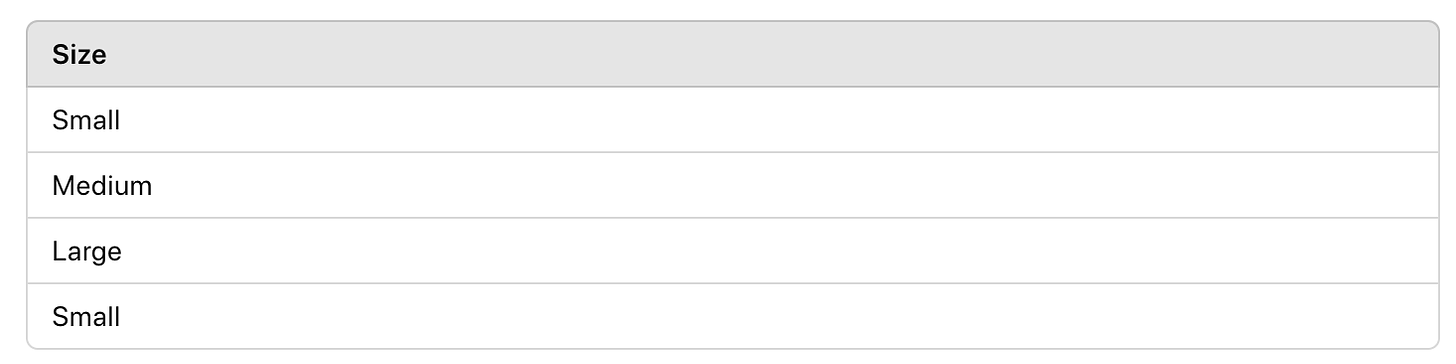

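Label Encoding assigns a unique integer to each category. The method is simple and works well for ordinal data, provided the integers are assigned in the categories' natural order. For nominal data, the integers can suggest an ordering that does not exist.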

Example: Consider the categorical feature "Size" with categories: Small, Medium, Large.

Input Data:

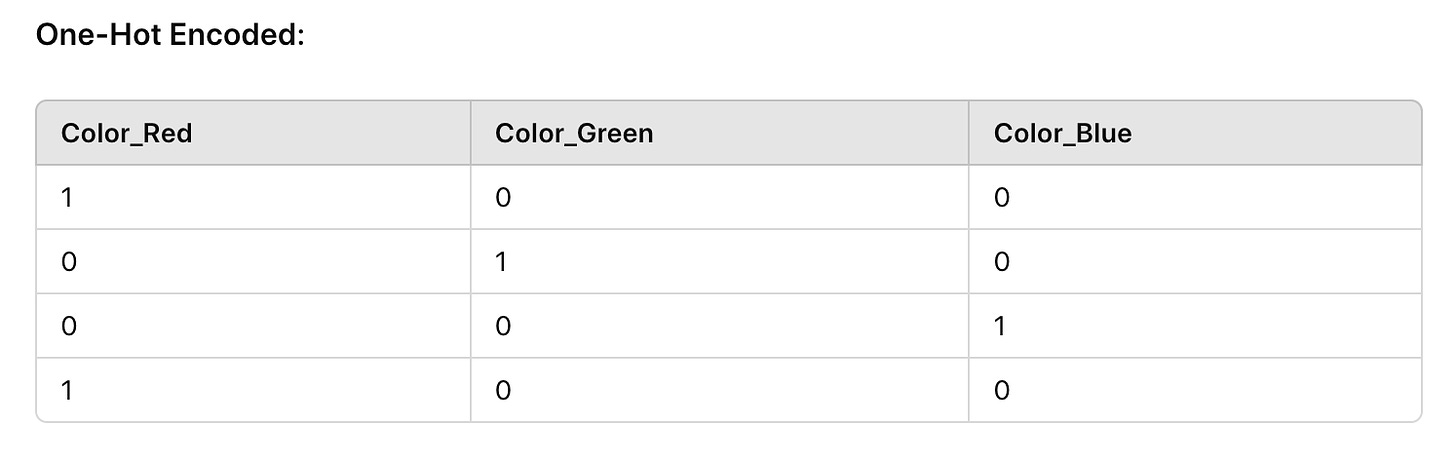
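
As a minimal sketch of the idea, the snippet below label encodes the "Size" feature in plain Python. The helper name `label_encode` and the sample rows are assumptions made for illustration. Integers are assigned in order of first appearance, which is a choice made here; library encoders may order categories differently (scikit-learn's `LabelEncoder`, for example, sorts them alphabetically).

```python
def label_encode(values):
    """Map each distinct category to an integer, in order of first appearance."""
    mapping = {}
    for value in values:
        if value not in mapping:
            mapping[value] = len(mapping)  # next unused integer
    return [mapping[value] for value in values], mapping


# Hypothetical sample rows for the "Size" feature
sizes = ["Small", "Medium", "Large", "Medium", "Small"]
encoded_sizes, size_mapping = label_encode(sizes)

print(size_mapping)   # {'Small': 0, 'Medium': 1, 'Large': 2}
print(encoded_sizes)  # [0, 1, 2, 1, 0]
```

Because "Small" happens to appear first here, the integers follow the natural order of the sizes. With a different row order they would not, so an explicit mapping is safer whenever the order matters.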

One-Hot Encoding

One-Hot Encoding creates a new binary column for each category. This method is suitable for nominal data.

Input Data:

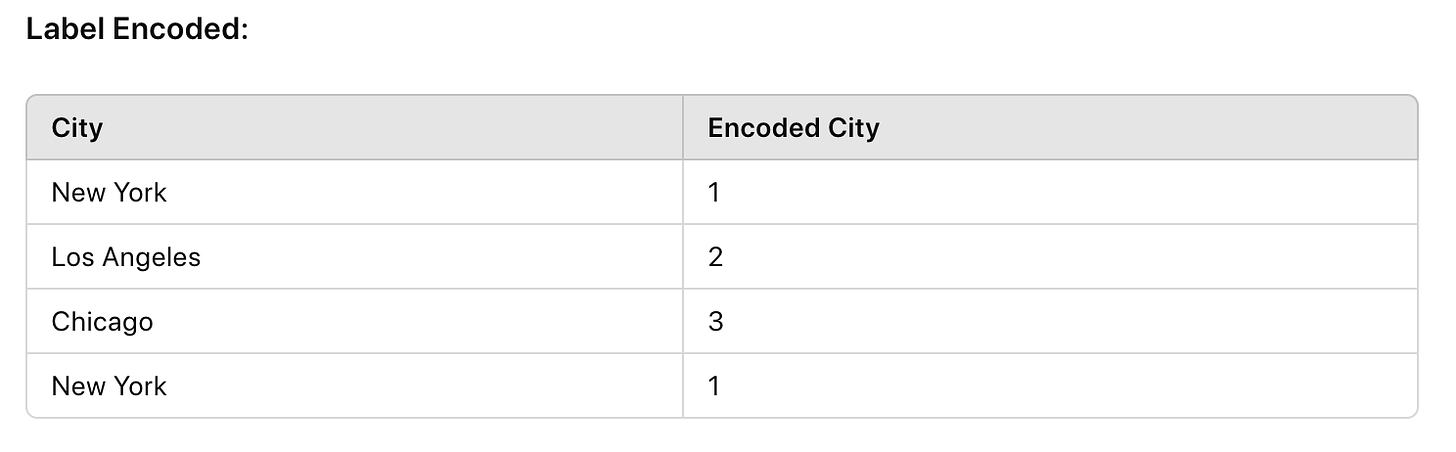
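
To make this concrete, here is a small sketch in plain Python. The "Color" feature, its values, and the helper `one_hot_encode` are hypothetical and chosen only for illustration. In practice, libraries such as pandas (`get_dummies`) and scikit-learn (`OneHotEncoder`) provide this functionality.

```python
def one_hot_encode(values):
    """Return one binary row per value, plus the column order used."""
    categories = sorted(set(values))  # one column per distinct category
    rows = [[1 if value == category else 0 for category in categories]
            for value in values]
    return rows, categories


# Hypothetical nominal feature with no natural order
colors = ["Red", "Green", "Blue", "Green"]
one_hot_rows, one_hot_columns = one_hot_encode(colors)

print(one_hot_columns)  # ['Blue', 'Green', 'Red']
for row in one_hot_rows:
    print(row)
# [0, 0, 1]
# [0, 1, 0]
# [1, 0, 0]
# [0, 1, 0]
```

Each row contains exactly one 1, so no category looks "larger" than another to the model.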

Binary Encoding

Binary Encoding combines Label Encoding and One-Hot Encoding. Categories are first label encoded, the resulting integers are written in binary, and each bit gets its own column.

Input Data:

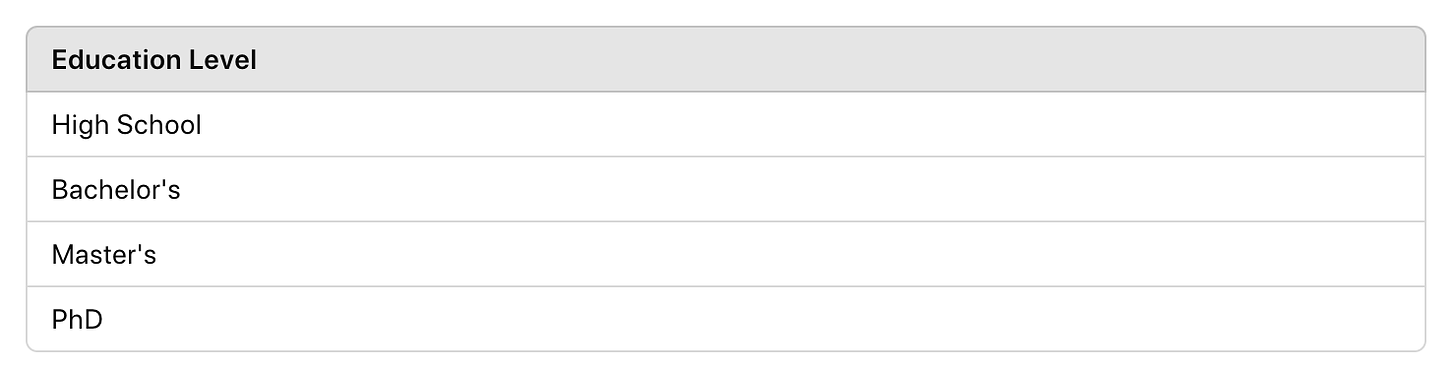

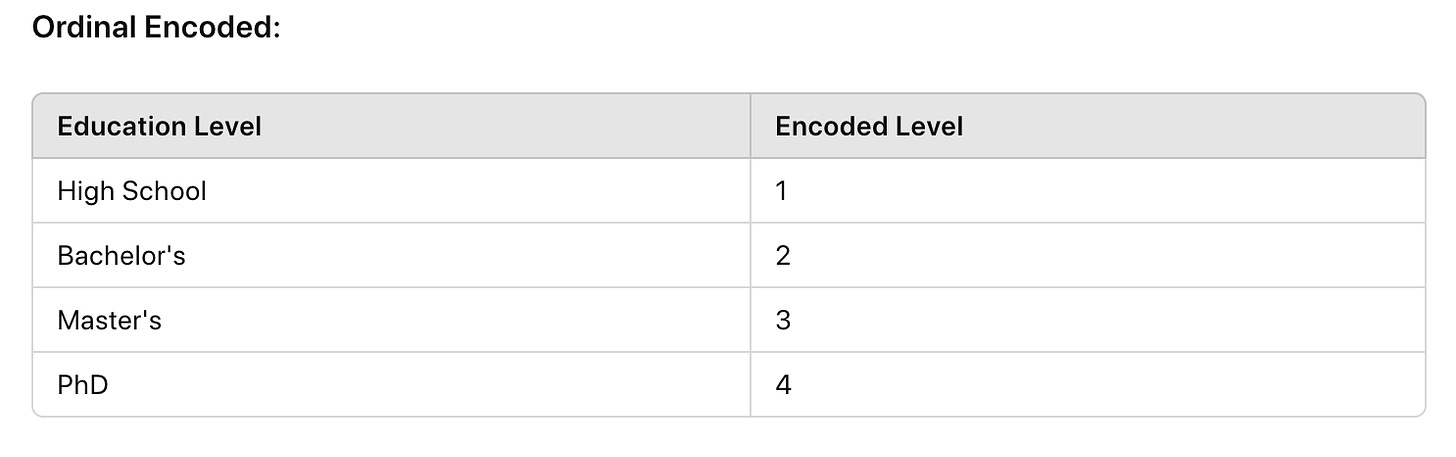
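
The two steps can be sketched in plain Python as follows. The fruit names and the helper `binary_encode` are assumptions for illustration, and the integer codes start at 0 here, which dedicated libraries may do differently.

```python
def binary_encode(values):
    """Label encode the categories, then spread each integer's bits over columns."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    # Number of bits needed to represent the largest integer code
    width = max(1, (len(categories) - 1).bit_length())
    rows = [[int(bit) for bit in format(index[value], f"0{width}b")]
            for value in values]
    return rows, width


# Hypothetical feature with five categories
fruits = ["Apple", "Banana", "Cherry", "Date", "Elderberry"]
binary_rows, n_columns = binary_encode(fruits)

print(n_columns)  # 3
for fruit, row in zip(fruits, binary_rows):
    print(fruit, row)
# Apple [0, 0, 0]
# Banana [0, 0, 1]
# Cherry [0, 1, 0]
# Date [0, 1, 1]
# Elderberry [1, 0, 0]
```

Five categories fit in three columns, where one-hot encoding would need five.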

Ordinal Encoding

Ordinal Encoding is used when categories have a meaningful order. Each category is mapped to an integer that reflects its order.

Input Data:

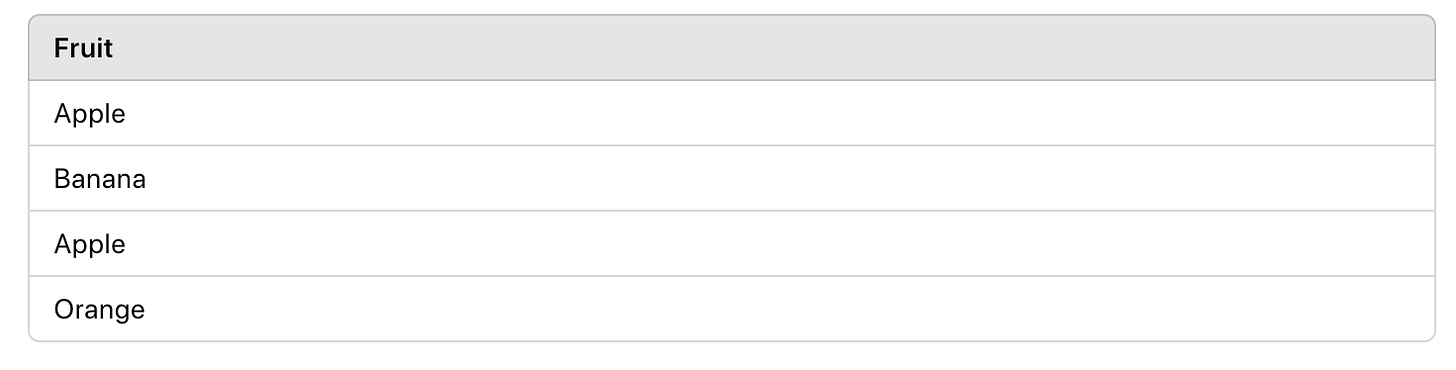

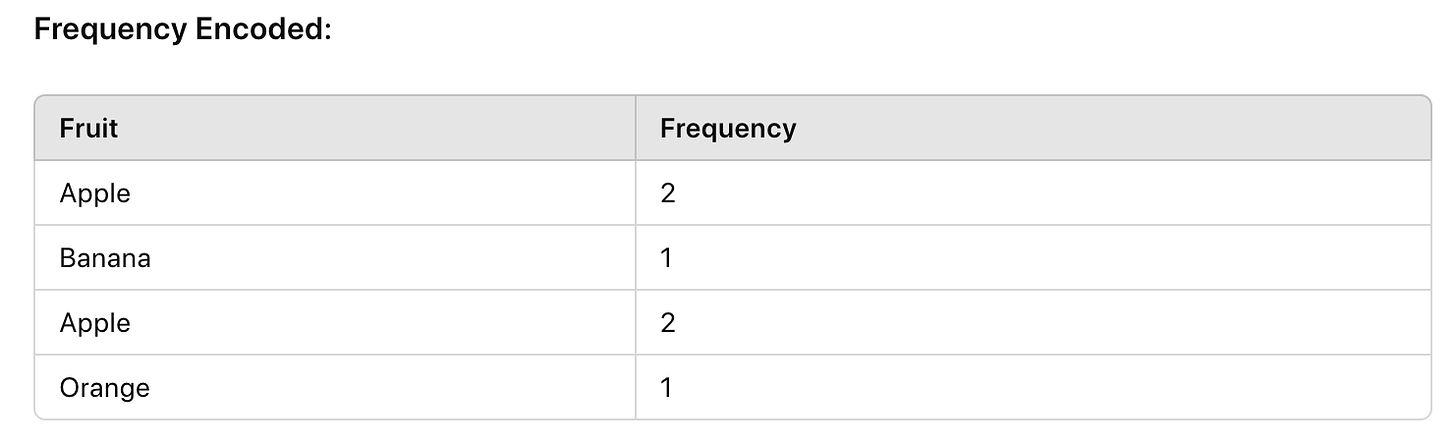
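
A minimal sketch of ordinal encoding, assuming the order of the categories is supplied explicitly. The education levels serve as the example feature, and the names `EDUCATION_ORDER` and `ordinal_encode` are hypothetical.

```python
# Categories listed from lowest to highest; the list position becomes the code
EDUCATION_ORDER = ["High School", "Bachelor's", "Master's", "PhD"]


def ordinal_encode(values, ordered_categories):
    """Map each value to its position in the given ordering."""
    rank = {category: i for i, category in enumerate(ordered_categories)}
    return [rank[value] for value in values]  # raises KeyError on unknown values


levels = ["Master's", "High School", "PhD", "Bachelor's"]
encoded_levels = ordinal_encode(levels, EDUCATION_ORDER)

print(encoded_levels)  # [2, 0, 3, 1]
```

Unlike plain label encoding, the integers here are guaranteed to follow the stated order, so comparisons such as "greater than" remain meaningful.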

Frequency Encoding

Frequency Encoding replaces each category with the number of times it appears in the dataset. Categories that occur equally often receive the same value.
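
As a rough sketch, frequency encoding can be written with the standard library's `Counter`. The city names and the helper `frequency_encode` are made up for illustration.

```python
from collections import Counter


def frequency_encode(values):
    """Replace each value with how often it occurs in the input."""
    counts = Counter(values)
    return [counts[value] for value in values]


# Hypothetical feature values
cities = ["Paris", "London", "Paris", "Tokyo", "Paris", "London"]
encoded_cities = frequency_encode(cities)

print(encoded_cities)  # [3, 2, 3, 1, 3, 2]
```

Dividing each count by `len(values)` would give relative frequencies instead of raw counts, which is a common variation.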


Why Encoding is Important in Machine Learning

Encoding categorical data into numerical format is a critical preprocessing step in machine learning. Here are several reasons why encoding is essential:

1. Machine Learning Algorithms Require Numerical Input

Most machine learning algorithms, particularly those involving mathematical operations like distance calculations, require numerical inputs. Linear regression and neural networks operate on numbers only, and many decision tree implementations also expect numeric features, so categorical data cannot be passed to them directly. Encoding converts categorical data into a numerical format that these algorithms can interpret.

2. Preserves Information and Relationships

Proper encoding techniques ensure that the information and relationships within the categorical data are preserved. For example, ordinal encoding respects the inherent order of ordinal categories, while one-hot encoding prevents the model from assuming an implicit order in nominal data. This preservation is crucial for maintaining the integrity of the data and for the model to learn correctly.

3. Enhances Model Performance

The choice of encoding can noticeably affect the performance of machine learning models. Properly encoded data helps the model learn more effectively, leading to better predictions. Techniques like target encoding (replacing each category with a statistic of the target variable, such as its mean, for that category) and frequency encoding can provide the model with additional context, potentially improving its predictive power.

4. Reduces Dimensionality

Some encoding techniques, like binary encoding, produce far fewer columns than one-hot encoding: a feature with n categories needs n one-hot columns but only about log2(n) binary columns. High-dimensional data can lead to overfitting, where the model performs well on training data but poorly on unseen data. Keeping dimensionality low helps in building more generalizable models.

5. Handles High Cardinality

High cardinality refers to categorical variables with a large number of unique values, such as user IDs or product codes. Encoding techniques like target encoding and frequency encoding can effectively handle high cardinality, making the data more manageable and useful for machine learning models.

6. Improves Model Interpretability

Encoded data can improve the interpretability of the model. For instance, using ordinal encoding for education levels (e.g., High School, Bachelor's, Master's, PhD) provides a clear numerical representation of the hierarchy, making it easier to interpret the model’s output.

7. Facilitates Data Integration

Encoding categorical data facilitates the integration of datasets from different sources. When combining datasets, ensuring that categorical variables are encoded consistently helps maintain data integrity and prevents errors in downstream analysis.
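
The point about consistent encoding can be illustrated with a short sketch: one shared mapping is applied to two datasets, and categories missing from the mapping receive a sentinel value. The mapping `SIZE_MAPPING`, the sentinel `UNKNOWN = -1`, and both datasets are assumptions for illustration, not a prescribed convention.

```python
# One shared mapping, defined once and reused for every dataset
SIZE_MAPPING = {"Small": 0, "Medium": 1, "Large": 2}
UNKNOWN = -1  # assumed sentinel for categories missing from the mapping


def encode_with_mapping(values, mapping, unknown=UNKNOWN):
    """Encode values with a fixed mapping so codes agree across datasets."""
    return [mapping.get(value, unknown) for value in values]


# Two hypothetical datasets from different sources
dataset_a = ["Small", "Large", "Medium"]
dataset_b = ["Medium", "Extra Large", "Small"]

encoded_a = encode_with_mapping(dataset_a, SIZE_MAPPING)
encoded_b = encode_with_mapping(dataset_b, SIZE_MAPPING)

print(encoded_a)  # [0, 2, 1]
print(encoded_b)  # [1, -1, 0]
```

If each dataset were label encoded on its own, the same category could end up with different integers in each, and the combined data would be silently wrong.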
